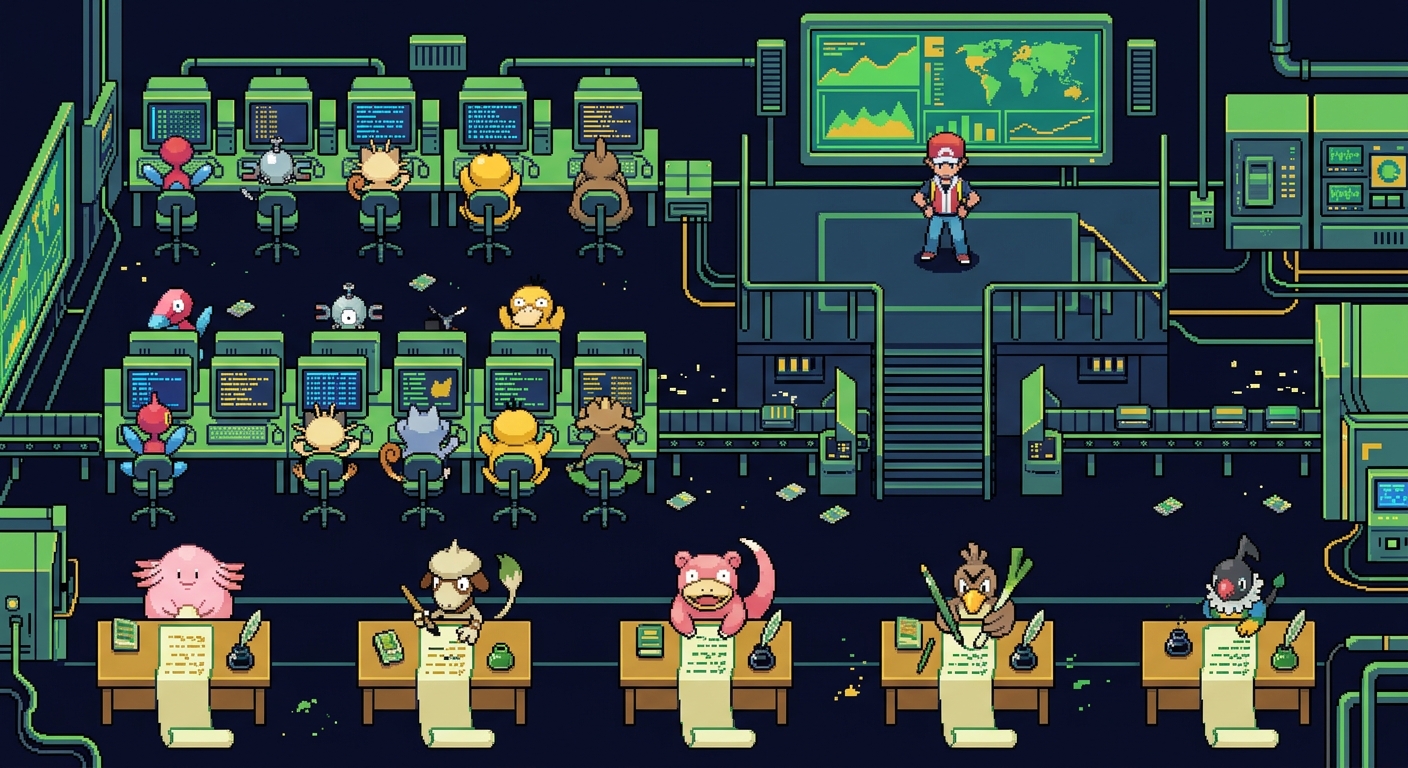

At Uber, the hardest part of any task was never writing the code. It was the research and design phase — figuring out where and what to change. When the codebase is massive, the documentation is lost, and the previous maintainer is gone, you spend 80% of your time building a mental model of a system someone else built years ago.

| | Rare Candy (Cursor) | Pokémon (Claude Code) |
|---|---|---|
| Input | Your cursor position + surrounding code | A goal: "Add Stripe billing to this app" |
| Output | Code suggestion | Plan → code → tests → iterate until done |
| Who drives? | You | The agent, within your guardrails |
| Key skill | Prompt engineering | Context engineering |

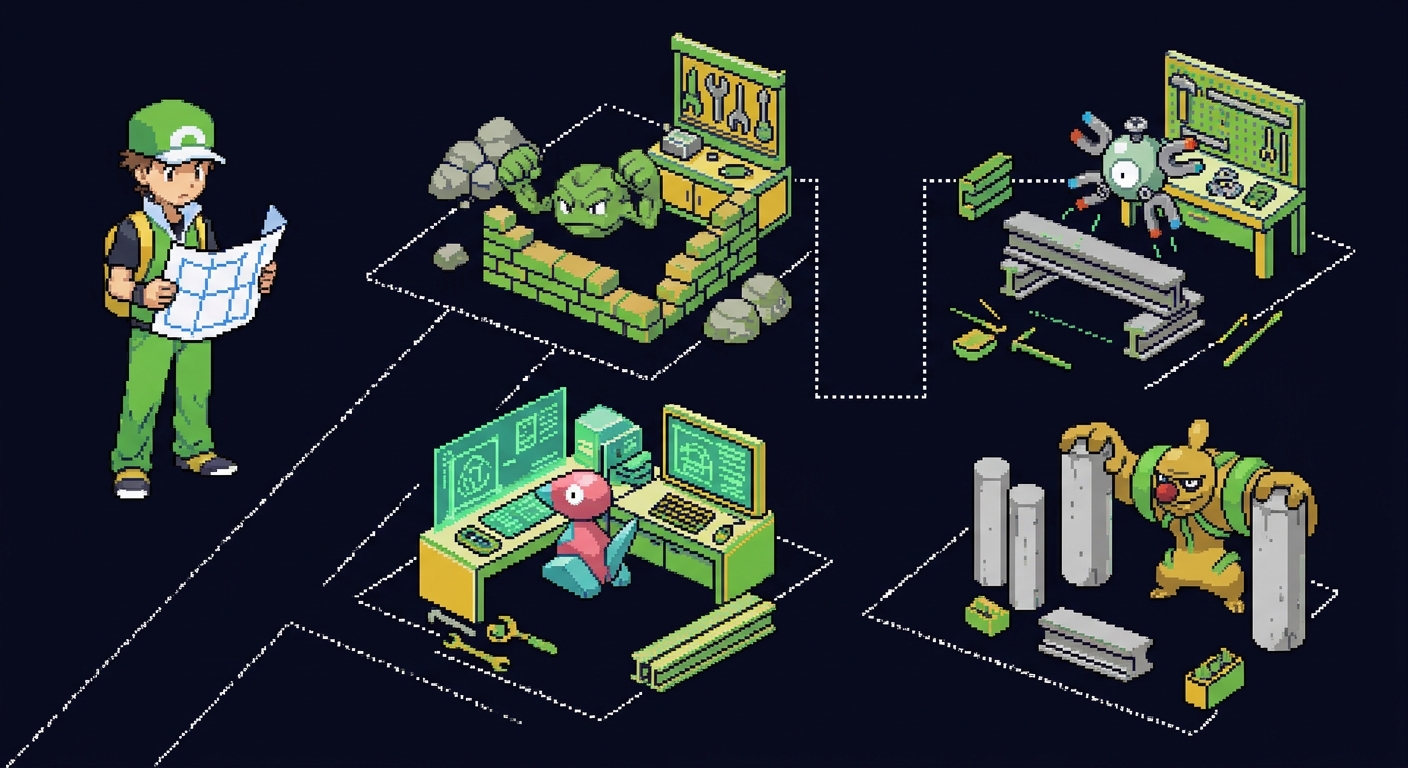

I always start in Plan Mode. The agent analyzes the codebase and proposes an approach. I review the plan, adjust it, then say "execute." And one rule: "You debug it yourself. I only want results." The agent curls the API, reads the logs, and writes tests to prove the code is correct.

Write clear specifications with acceptance criteria before the agent touches code. The spec is your highest-leverage artifact.

Structure your codebase so agents can navigate it — clear file naming, module boundaries, up-to-date docs. The agent reads your code like a new hire.

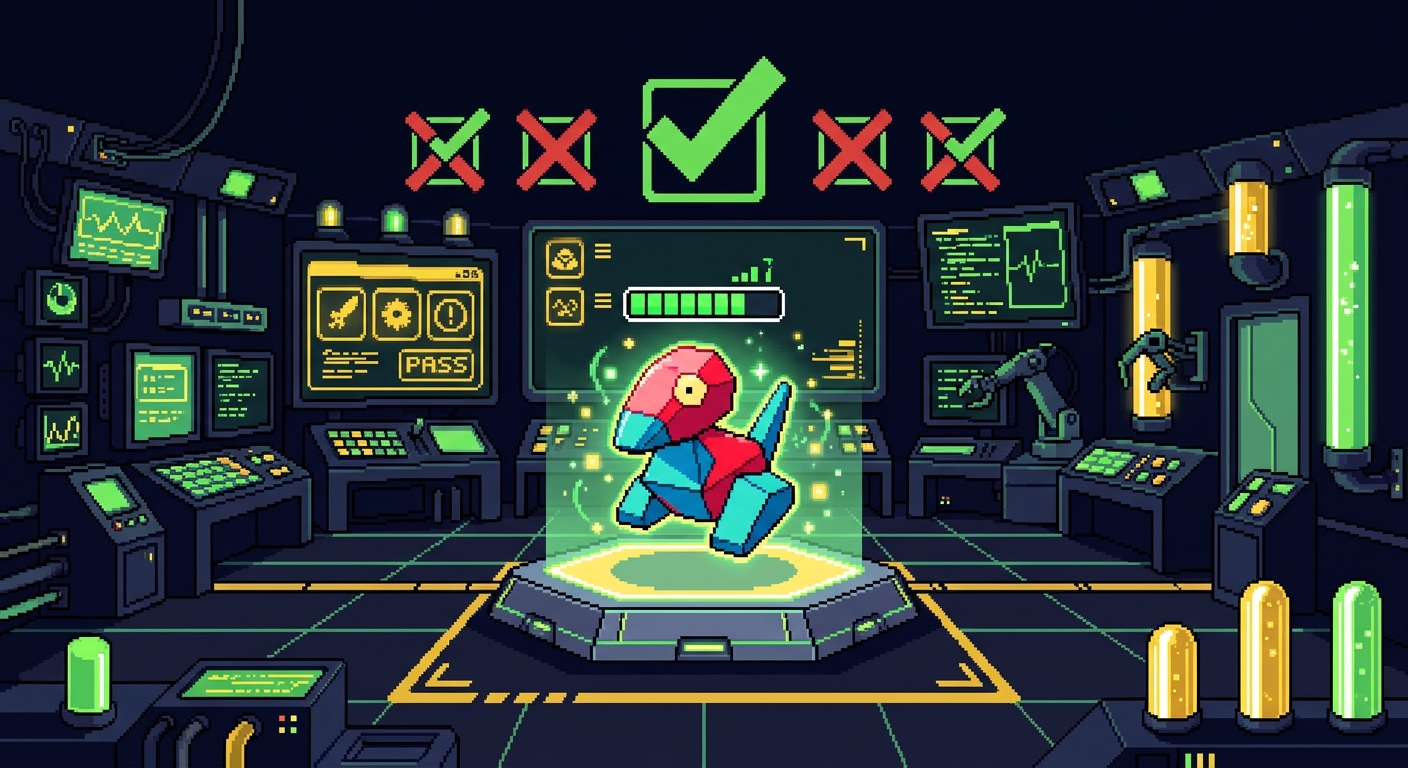

Tests, type checkers, linters. The Pokémon needs to know when it's wrong. Without feedback, it confidently produces garbage.

The agent must write its own integration and unit tests. For the backend: curl the actual API and verify responses. For the frontend: Playwright MCP — the agent opens a real browser, navigates the UI, and verifies things render and behave correctly. The agent doesn't just write code. It proves the code works.
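A minimal sketch of the backend half of that loop, written with the Python standard library instead of `curl`. The `/health` endpoint, its JSON body, and the `HealthHandler` stand-in server are all hypothetical; in a real project the check would point at your actual service.

```python
import json
import threading
import urllib.request
from http.server import BaseHTTPRequestHandler, HTTPServer


class HealthHandler(BaseHTTPRequestHandler):
    """Hypothetical stand-in for the real backend under test."""

    def do_GET(self):
        if self.path == "/health":
            body = json.dumps({"status": "ok"}).encode()
            self.send_response(200)
            self.send_header("Content-Type", "application/json")
            self.send_header("Content-Length", str(len(body)))
            self.end_headers()
            self.wfile.write(body)
        else:
            self.send_error(404)

    def log_message(self, format, *args):
        pass  # keep test output quiet


def check_health(base_url: str) -> dict:
    """Call the live endpoint and fail loudly if the response is wrong."""
    # An empty ProxyHandler makes the request go straight to the local server.
    opener = urllib.request.build_opener(urllib.request.ProxyHandler({}))
    with opener.open(f"{base_url}/health", timeout=5) as resp:
        assert resp.status == 200, f"unexpected status {resp.status}"
        payload = json.loads(resp.read())
    assert payload.get("status") == "ok", f"unexpected body {payload}"
    return payload


def run_check() -> dict:
    """Start the stand-in server on a free port, run the check, shut down."""
    server = HTTPServer(("127.0.0.1", 0), HealthHandler)  # port 0 = any free port
    thread = threading.Thread(target=server.serve_forever, daemon=True)
    thread.start()
    try:
        port = server.server_address[1]
        return check_health(f"http://127.0.0.1:{port}")
    finally:
        server.shutdown()
        server.server_close()
```

The point is that the check exercises a running server over HTTP, so a passing result says something about real behavior, not just about code that compiles.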

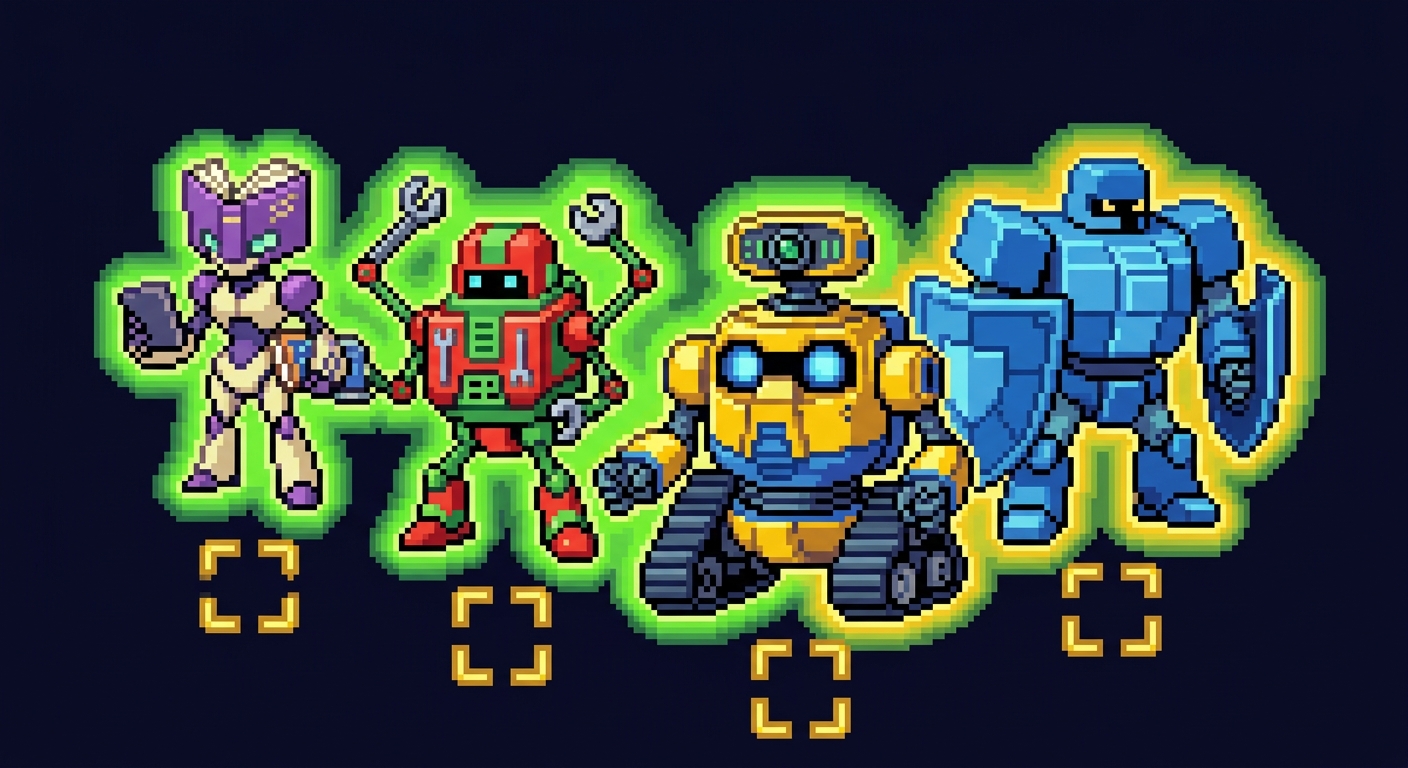

Agent-to-tool communication. Any Pokémon can use any item, API, or data source. Anthropic-originated. 97M+ monthly SDK downloads. MCP gives your Pokémon hands.

Custom slash commands (/today, /blog, /ci) encode repeatable combo moves.

CLAUDE.md is the trainer's manual every Pokémon reads on startup.

Hooks trigger before/after agent actions — your inspection gates in code.
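As a rough illustration of an inspection gate, here is a sketch of a pre-action hook script. It assumes the hook receives a JSON payload on stdin with `tool_name` and `tool_input` fields, and that exiting with code 2 blocks the action; verify both against the hooks documentation for your agent. The deny-list is hypothetical.

```python
import json
import sys

# Hypothetical deny-list; tune it to your own guardrails.
BLOCKED_SUBSTRINGS = ("rm -rf", "git push --force", "DROP TABLE")


def decide(payload: dict) -> tuple[int, str]:
    """Return (exit_code, message) for one pending tool call.

    Assumed payload shape: {"tool_name": str, "tool_input": {"command": str}}.
    """
    if payload.get("tool_name") != "Bash":
        return 0, ""  # only shell commands are inspected in this sketch
    command = payload.get("tool_input", {}).get("command", "")
    for needle in BLOCKED_SUBSTRINGS:
        if needle in command:
            # Assumption: exit code 2 tells the agent the action was blocked.
            return 2, f"Blocked by inspection gate: contains {needle!r}"
    return 0, ""


def main() -> int:
    """Read one payload from stdin, report any block reason on stderr."""
    code, message = decide(json.load(sys.stdin))
    if message:
        print(message, file=sys.stderr)
    return code
```

To use it as a standalone script, end the file with `sys.exit(main())`. Keeping the decision in a pure function like `decide` makes the gate itself easy to unit test.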

The most dangerous failure isn't the loud one. I had a coding agent make changes that passed all existing tests, looked correct in review, and shipped. Days later, I discovered the changes had broken a subtle invariant that no test covered. The code failed silently. No error logs. No crash. Just wrong behavior that took days to trace back.
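One way to guard against this kind of silent failure is to state the invariant in code and check it under randomized input. The sketch below is not the system from the story; `transfer`, the account names, and the "total balance is conserved" invariant are hypothetical stand-ins for whatever rule your own code must never break.

```python
import random


def transfer(balances: dict[str, int], src: str, dst: str, amount: int) -> None:
    """Hypothetical function under test: move funds between accounts."""
    if amount <= 0 or balances[src] < amount:
        return  # reject invalid transfers, leaving state untouched
    balances[src] -= amount
    balances[dst] += amount


def check_invariants(balances: dict[str, int], expected_total: int) -> None:
    """Invariants that example-based tests tend to leave implicit."""
    assert sum(balances.values()) == expected_total, "money created or destroyed"
    assert all(v >= 0 for v in balances.values()), "negative balance"


def fuzz_transfers(rounds: int = 1000, seed: int = 0) -> int:
    """Apply random transfers and re-check the invariants after each one."""
    rng = random.Random(seed)  # fixed seed keeps failures reproducible
    balances = {"a": 100, "b": 50, "c": 0}
    total = sum(balances.values())
    for _ in range(rounds):
        src, dst = rng.sample(sorted(balances), 2)
        transfer(balances, src, dst, rng.randint(-10, 200))
        check_invariants(balances, total)
    return rounds
```

If an agent later edits `transfer` in a way that breaks conservation, this check fails immediately instead of days later.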

Uncoordinated party: errors amplify 17.2x

Centralized trainer: contained to 4.4x

Don't add Pokémon. Add the right Pokémon.

Start free: the Claude.ai free tier, the GitHub Copilot student plan, and the Cursor free tier get you surprisingly far. Once you outgrow them, here is what I do: I run my entire operation on multiple $200/mo Claude subscriptions plus a CLI-to-API proxy, at 1/7 to 1/10 the cost of raw API calls.

/today: the agent proposes today's priorities. A `yarn blog` loop produces content. Cron jobs run recurring agentic tasks with `claude -p` in a Cloudflare sandbox.
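A minimal sketch of the kind of wrapper a cron job might call. It relies only on `claude -p`, which runs a single prompt non-interactively and prints the result. The task names, the prompts, and the `run_task` helper are hypothetical, and the sandboxing itself is not shown.

```python
import subprocess
from typing import Callable, Sequence

# Hypothetical recurring tasks; the prompts are placeholders.
TASKS = {
    "today": "Review my open issues and propose today's priorities.",
    "ci": "Summarize failing CI runs from the last 24 hours.",
}


def build_command(prompt: str) -> list[str]:
    """Build the argv for one non-interactive `claude -p` run."""
    return ["claude", "-p", prompt]


def _run_cli(command: Sequence[str]) -> str:
    """Run the CLI and return its stdout; raises if the command fails."""
    result = subprocess.run(
        command, capture_output=True, text=True, check=True, timeout=600
    )
    return result.stdout


def run_task(name: str, runner: Callable[[Sequence[str]], str] = _run_cli) -> str:
    """Look up a named task and run it; `runner` is injectable for testing."""
    return runner(build_command(TASKS[name]))
```

Passing the prompt as a separate list element, not as part of a shell string, avoids quoting problems when prompts contain apostrophes or other special characters.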

Experts tend to be conservative at the edges of what agents can do. They know too much about what should be hard, so they don't try. They optimize within known boundaries.

Newcomers use their imagination. They ask "what if I just told the agent to do this?" and discover it works more often than the experts expect. They push the boundaries because they don't know where the boundaries are.

I would follow my passion, follow the money, and try doing everything with AI agents. Not because the technology is perfect — you've seen in this talk it's far from it. But the skill of designing agent systems, finding their limits, and building around those limits is the most valuable skill you can develop right now.

“Not patching old processes — a Copernican revolution for the digital world.”

/study-plan, /job-apply. Build your own automation.