Apache Kafka is a distributed streaming platform.

Why use Apache Kafka?

Its abstraction is a ==queue==, and its features include:

- A distributed publish-subscribe (pub-sub) messaging system that reduces the N^2 point-to-point relationships between publishers and subscribers to N connections to the cluster. Publishers and subscribers can each operate at their own rate.

- Ultra-fast zero-copy technology.

- Support for fault-tolerant data persistence.
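
The N^2-to-N claim above can be checked with simple arithmetic. The sketch below is only an illustration: the function names are hypothetical, and it assumes every producer would otherwise need a direct link to every consumer.

```python
def direct_links(producers: int, consumers: int) -> int:
    """Links needed when every producer talks to every consumer directly."""
    return producers * consumers


def brokered_links(producers: int, consumers: int) -> int:
    """Links needed when each side only connects to the broker cluster."""
    return producers + consumers


n = 10
print(direct_links(n, n))    # 100, grows as N^2
print(brokered_links(n, n))  # 20, grows linearly in N
```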

It can be applied to:

- Logging by topic.

- Messaging systems.

- Off-site backups.

- Stream processing.

Why is Kafka so fast?

Kafka uses zero-copy technology, in which the CPU does not perform the task of copying data from one memory area to another.

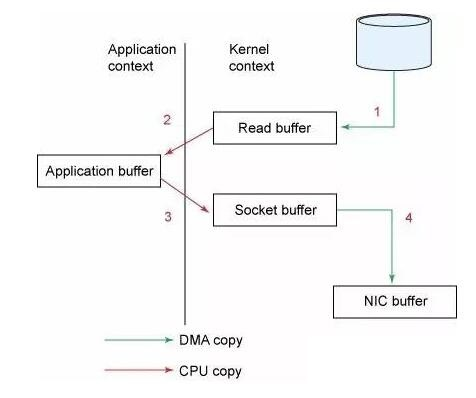

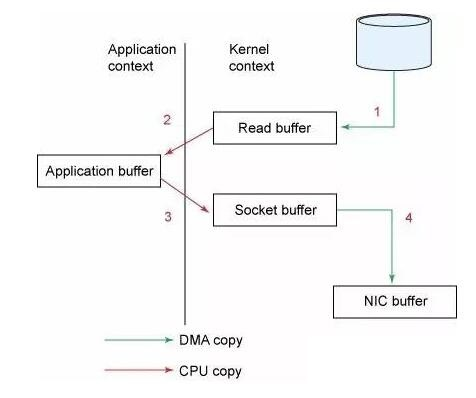

Without zero-copy technology:

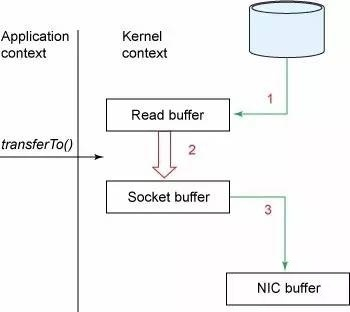

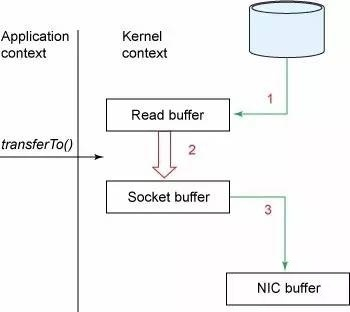

With zero-copy technology:

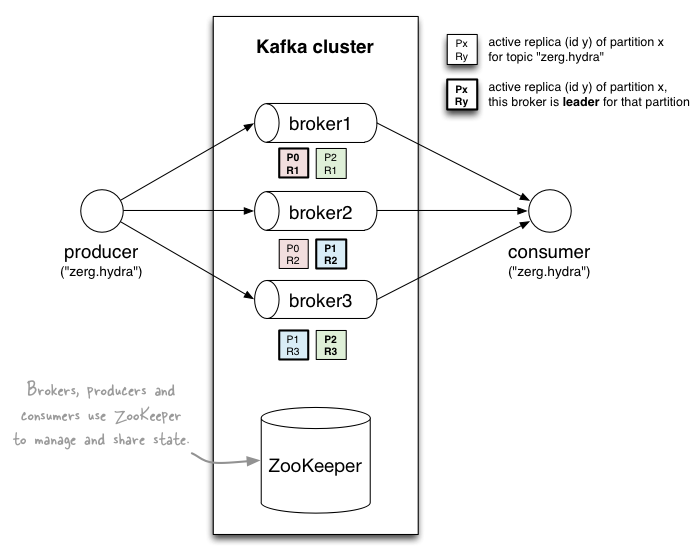
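
As a rough illustration of the zero-copy path, the sketch below sends a file over a socket with Python's `socket.sendfile()`, which uses `os.sendfile()` where the platform supports it and falls back to ordinary `send()` otherwise. This is not Kafka's code: the helper name, the temporary file, and the local socket pair are assumptions made for the demo.

```python
import os
import socket
import tempfile


def send_file_zero_copy(path: str, sock: socket.socket) -> int:
    """Send a whole file over a socket and return the number of bytes sent.

    Where os.sendfile() is available, the kernel moves the bytes from the
    file to the socket without passing them through user-space buffers.
    """
    with open(path, "rb") as f:
        return sock.sendfile(f)


# Demo setup (hypothetical): a small temp file and a connected socket pair.
payload = b"hello kafka" * 10
with tempfile.NamedTemporaryFile(delete=False) as tmp:
    tmp.write(payload)
    path = tmp.name

left, right = socket.socketpair()
sent = send_file_zero_copy(path, left)
left.close()  # signals end-of-stream to the reader

received = b""
while True:
    chunk = right.recv(4096)
    if not chunk:
        break
    received += chunk
right.close()
os.remove(path)
```

The application never reads the file contents into its own buffer; it only hands the file and the socket to the operating system.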

Architecture

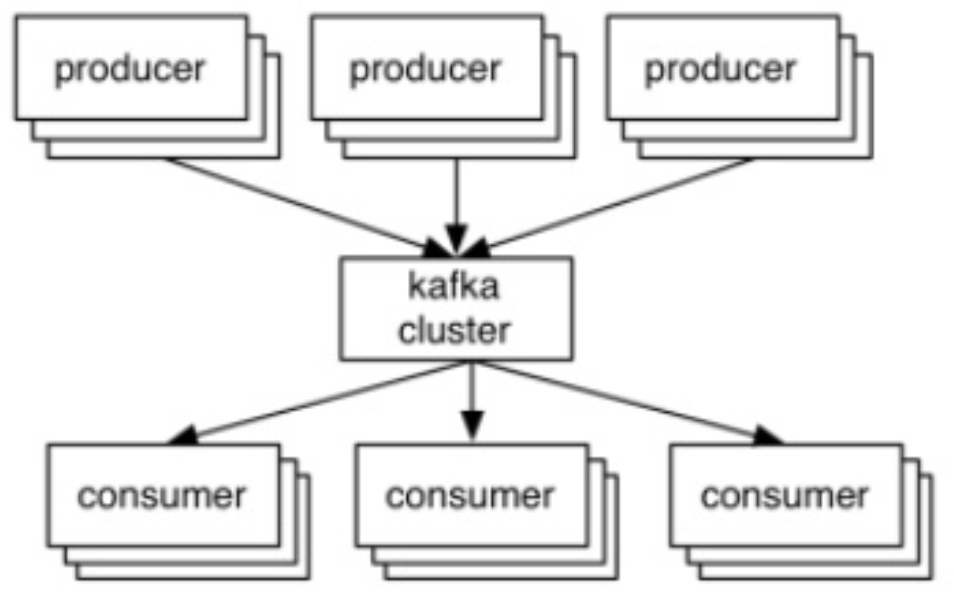

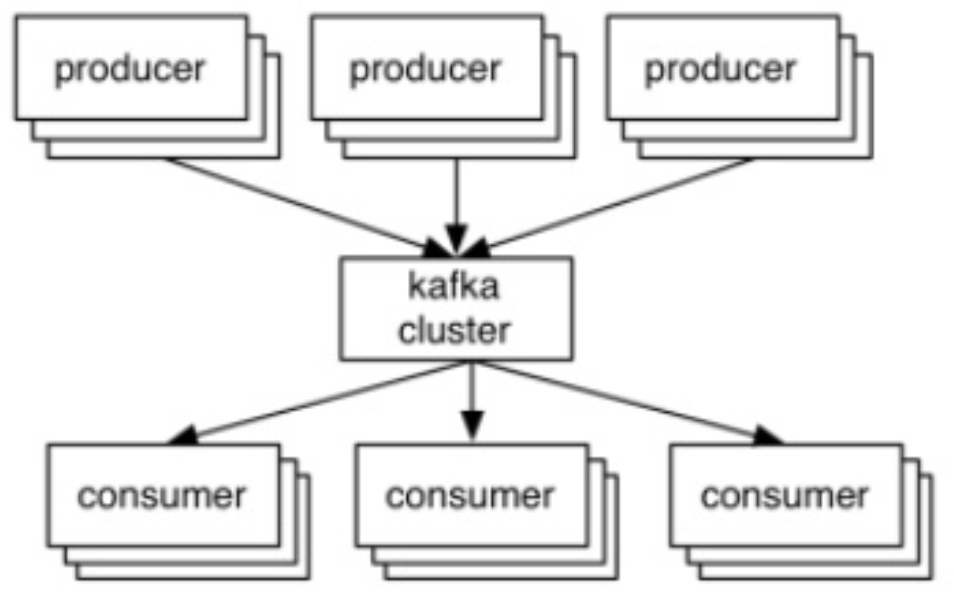

From the outside, producers write to the Kafka cluster, while consumers read from the Kafka cluster.

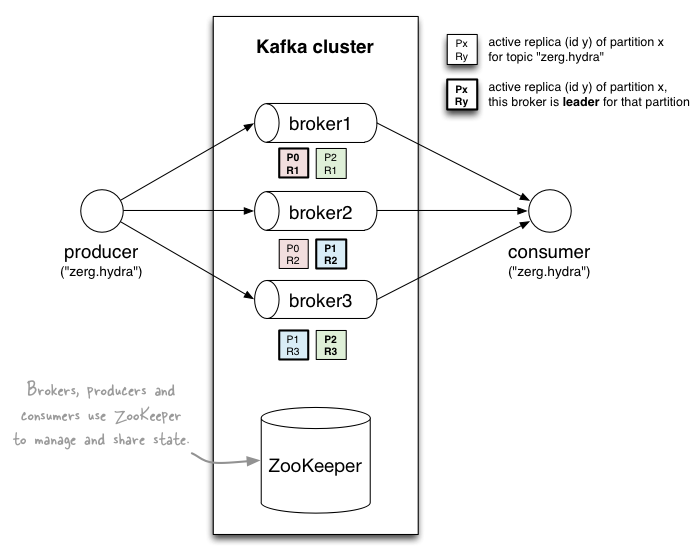

Data is stored by topic and divided into partitions, each of which can be replicated.

- Producers publish messages to specific topics.

  - Messages are first written to an in-memory buffer and then flushed to disk.

  - To achieve fast writes, an append-only sequential write is used.

  - Messages can be read only after they have been written.

- Consumers fetch messages from specific topics.

  - They use an "offset pointer" (the offset is a sequence ID) to track and control their own reading progress.

- A topic consists of partitions, which provide load balancing; each partition is an ordered, immutable sequence of records.

  - Partitions determine the maximum parallelism of a consumer group. At any given time, a partition is read by only one consumer in that group.
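
The points above can be sketched as a tiny in-memory model: each partition is an append-only list, an offset is an index into it, and partitions are spread over the consumers of a group. Everything here is an assumption for illustration, including the class and function names, the CRC32 key hash (a stand-in, not Kafka's actual partitioner), and the round-robin assignment.

```python
import zlib


class Topic:
    """A topic as a fixed set of append-only partitions (in memory only)."""

    def __init__(self, name: str, num_partitions: int):
        self.name = name
        self.partitions = [[] for _ in range(num_partitions)]

    def publish(self, key: str, value: str):
        """Append to the partition chosen by the key; return (partition, offset)."""
        p = zlib.crc32(key.encode()) % len(self.partitions)
        self.partitions[p].append(value)
        return p, len(self.partitions[p]) - 1

    def fetch(self, partition: int, offset: int, max_records: int = 10):
        """Read records starting at the consumer's offset; nothing is removed."""
        return self.partitions[partition][offset:offset + max_records]


def assign(num_partitions: int, consumers: list):
    """Round-robin partitions over one group's consumers."""
    assignment = {c: [] for c in consumers}
    for p in range(num_partitions):
        assignment[consumers[p % len(consumers)]].append(p)
    return assignment


topic = Topic("clicks", num_partitions=3)
p, first = topic.publish("user-1", "a")
_, second = topic.publish("user-1", "b")  # same key, so same partition

offset = 0                       # the consumer tracks its own progress
records = topic.fetch(p, offset)
offset += len(records)           # advance the offset pointer after reading

# Four consumers but only three partitions: one consumer stays idle.
group = assign(3, ["c1", "c2", "c3", "c4"])
```

Because the consumer owns its offset, it can re-read old records by resetting it, and a fourth consumer in a three-partition topic adds no parallelism.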

How is data serialized? Avro

What is its network protocol? TCP

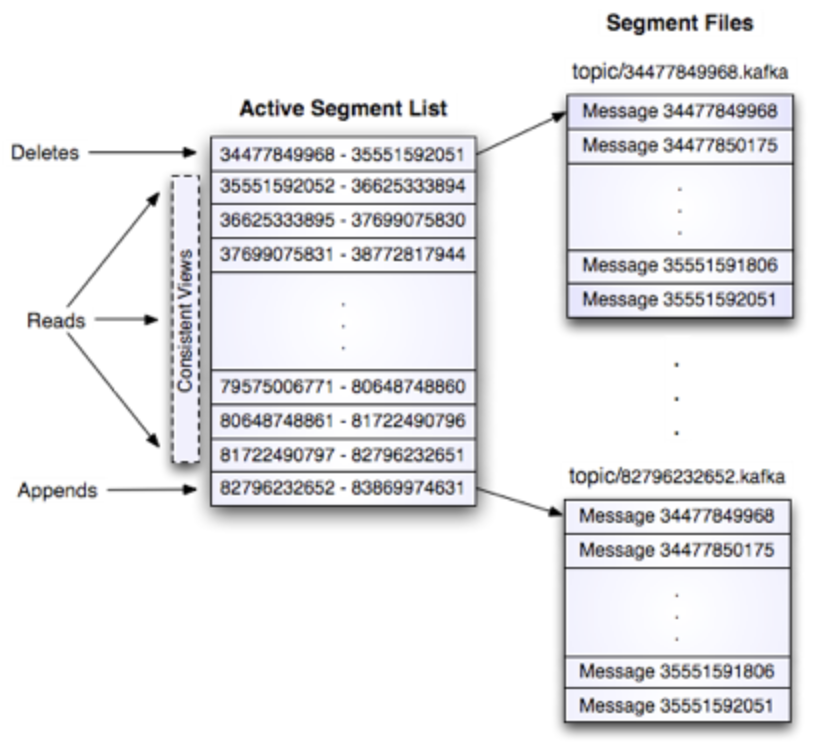

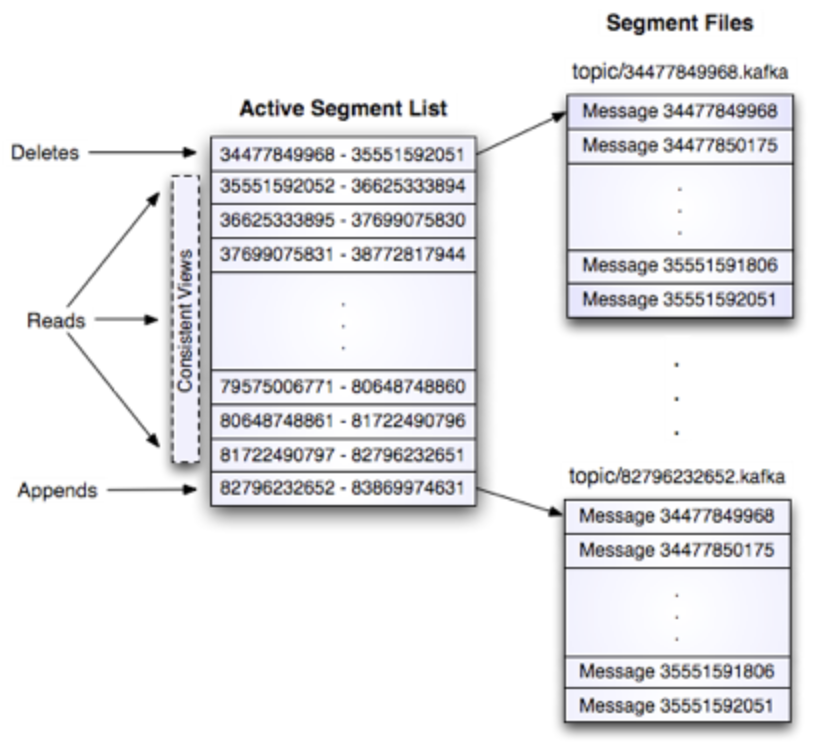

What is the storage layout within a partition? O(1) disk reads.

How does fault tolerance work?

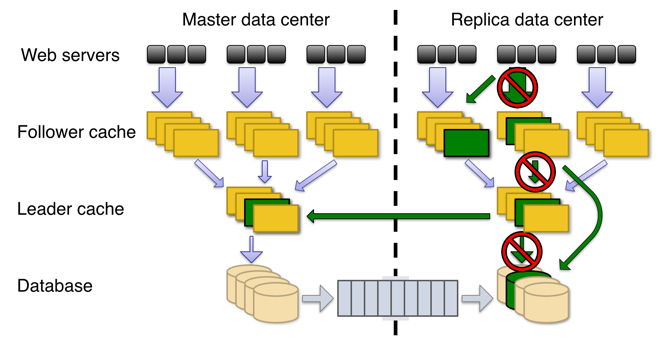

Through the ==In-Sync Replicas (ISR) protocol==, which tolerates (numReplicas - 1) node failures. Each partition has one leader and one or more followers.

Total replicas = In-sync replicas + Out-of-sync replicas

- ISR is a set of live replicas that are in sync with the leader (note that the leader is always in the ISR).

- When publishing new messages, the leader waits to commit the message until it has been received by all replicas in the ISR.

- ==If a follower fails to stay in sync, it is removed from the ISR, and the leader continues to commit new messages with fewer replicas in the ISR. Note that at this point, the system is operating in an under-replicated state.== If the leader fails, another replica in the ISR is elected as the new leader.

- Out-of-sync replicas continuously pull messages from the leader. Once they catch up to the leader, they will be added back to the ISR.
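
The ISR rules above can be sketched as a small state machine. This is a simplified model under stated assumptions, not the broker's implementation: the class and method names are hypothetical, followers catch up fully in one pull, and the new leader is picked arbitrarily from the ISR.

```python
class Partition:
    """Toy model of one partition's replicas and its ISR."""

    def __init__(self, replicas, leader):
        self.log_end = {r: 0 for r in replicas}  # messages held by each replica
        self.leader = leader
        self.isr = set(replicas)                 # the leader is always a member
        self.committed = 0                       # messages received by the whole ISR

    def _advance(self):
        # A message is committed once every replica in the ISR has it.
        self.committed = max(self.committed,
                             min(self.log_end[r] for r in self.isr))

    def append(self):
        """The leader accepts one new message from a producer."""
        self.log_end[self.leader] += 1
        self._advance()

    def replicate(self, follower):
        """A follower pulls from the leader; once caught up it (re)joins the ISR."""
        self.log_end[follower] = self.log_end[self.leader]
        self.isr.add(follower)
        self._advance()

    def drop_from_isr(self, follower):
        """A follower that fails to stay in sync leaves the ISR."""
        self.isr.discard(follower)
        self._advance()

    def fail_leader(self):
        """The leader dies; another ISR member takes over."""
        self.isr.discard(self.leader)
        del self.log_end[self.leader]
        self.leader = sorted(self.isr)[0]  # arbitrary pick for this sketch


part = Partition(["b1", "b2", "b3"], leader="b1")
part.append()
part.replicate("b2")
after_one_follower = part.committed   # b3 is still behind
part.replicate("b3")
after_all_followers = part.committed

part.drop_from_isr("b3")              # b3 lags, ISR shrinks to {b1, b2}
part.append()
part.replicate("b2")
with_smaller_isr = part.committed

part.replicate("b3")                  # b3 catches up and rejoins the ISR
part.fail_leader()                    # b1 fails, a surviving ISR member leads
```

In this model the committed count never moves backward, and the new leader always holds every committed message because it was in the ISR.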

Is Kafka an AP or CP system in the CAP theorem?

Jun Rao believes it is CA because "our goal is to support replication within a Kafka cluster in a single data center, where network partitions are rare, so our design focuses on maintaining high availability and strong consistency of replicas."

However, it actually depends on the configuration.

- With the default configuration (min.insync.replicas=1, default.replication.factor=1), you get an AP system (at most once).

- To achieve CP, set min.insync.replicas=2 and the topic replication factor to 3, then produce messages with acks=all (at least once). However, if a topic partition has fewer than 2 in-sync replicas, writes will not succeed.
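
The trade-off between the two configurations can be sketched as a single decision function. This is a simplified model, not broker code: the function name is hypothetical, and it only captures the rule that `min.insync.replicas` is enforced for `acks=all` writes.

```python
def write_accepted(acks: str, min_insync_replicas: int, isr_size: int) -> bool:
    """Return True if a produce request would be accepted in this model."""
    if isr_size < 1:
        return False  # no live leader for the partition
    if acks == "all":
        # CP-leaning: refuse the write rather than commit with too few copies.
        return isr_size >= min_insync_replicas
    # acks=0 or acks=1: the leader alone is enough, favoring availability.
    return True


# Replication factor 3, min.insync.replicas=2, acks=all:
print(write_accepted("all", 2, 3))  # True, all replicas in sync
print(write_accepted("all", 2, 2))  # True, one replica has dropped out
print(write_accepted("all", 2, 1))  # False, only the leader is left

# Same partition state with acks=1: the write still goes through.
print(write_accepted("1", 2, 1))    # True
```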