Introduction to Architecture

What is Architecture?

Architecture is the shape of a software system. To illustrate with a building:

- Paradigm is the bricks.

- Design principles are the rooms.

- Components are the structure.

They all serve a specific purpose, just like hospitals treat patients and schools educate students.

Why Do We Need Architecture?

Behavior vs. Structure

Every software system provides two distinct values to stakeholders: behavior and structure. Software developers must ensure that both values are high.

==Due to the nature of their work, software architects focus more on the structure of the system than on its features and functions.==

Ultimate Goal — ==Reduce the human resource costs required for adding new features==

Architecture serves the entire lifecycle of software systems, making them easy to understand, develop, test, deploy, and operate. Its goal is to minimize the human resource costs for each business use case.

O'Reilly's "Software Architecture Patterns" provides a great introduction to the following five fundamental architectures.

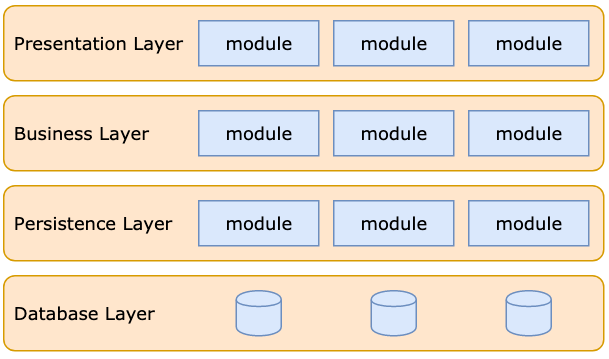

1. Layered Architecture

Layered architecture is widely adopted and well-known among developers. Therefore, it is the de facto standard at the application level. If you are unsure which architecture to use, layered architecture is a good choice.

Examples:

- TCP/IP model: Application Layer > Transport Layer > Internet Layer > Network Interface Layer

- Facebook TAO: Network Layer > Cache Layer (follower + leader) > Database Layer
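
To make the layering idea concrete, here is a minimal sketch in Python. It only borrows the ordering of layers from the TAO example above and is not a description of how TAO is implemented. The class names (`ApiLayer`, `CacheLayer`, `DatabaseLayer`) and the `build_stack` helper are hypothetical, and an in-memory dict stands in for a real database. The point it illustrates is that each layer talks only to the layer directly below it.

```python
class DatabaseLayer:
    """Bottom layer: owns the data (a dict stands in for a real store)."""

    def __init__(self):
        self._rows = {}

    def load(self, key):
        return self._rows.get(key)

    def store(self, key, value):
        self._rows[key] = value


class CacheLayer:
    """Middle layer: answers reads from memory, falls through to the database."""

    def __init__(self, database):
        self._database = database
        self._cache = {}
        self.hits = 0

    def get(self, key):
        if key in self._cache:
            self.hits += 1
            return self._cache[key]
        value = self._database.load(key)
        if value is not None:
            self._cache[key] = value
        return value

    def put(self, key, value):
        # Write-through: update the lower layer first, then the cache.
        self._database.store(key, value)
        self._cache[key] = value


class ApiLayer:
    """Top layer: the only layer callers see; it never touches the database."""

    def __init__(self, cache):
        self._cache = cache

    def handle_get(self, key):
        value = self._cache.get(key)
        status = 200 if value is not None else 404
        return {"status": status, "body": value}

    def handle_put(self, key, value):
        self._cache.put(key, value)
        return {"status": 200, "body": value}


def build_stack():
    # Wire the layers top-down; each one receives only its direct neighbour.
    return ApiLayer(CacheLayer(DatabaseLayer()))
```

Because every layer depends only on its neighbour, each one can be tested with a stub in place of the layer below, which is where the testability benefit comes from.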

Pros and Cons:

- Pros

  - Easy to use

  - Clear responsibilities

  - Testability

- Cons

  - Large and rigid

  - Adjusting, extending, or updating the architecture requires changes across all layers, which can be quite tricky.


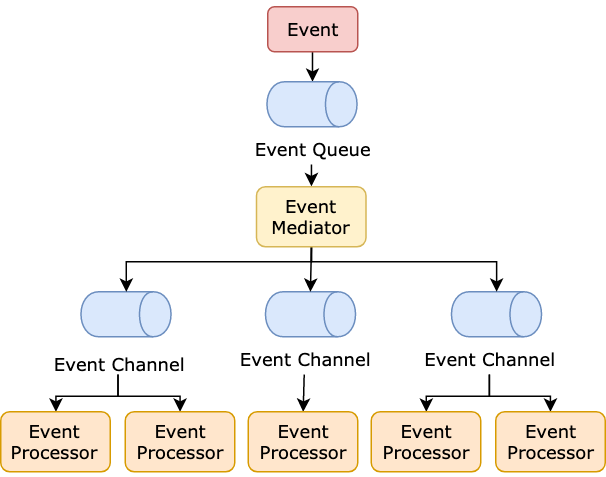

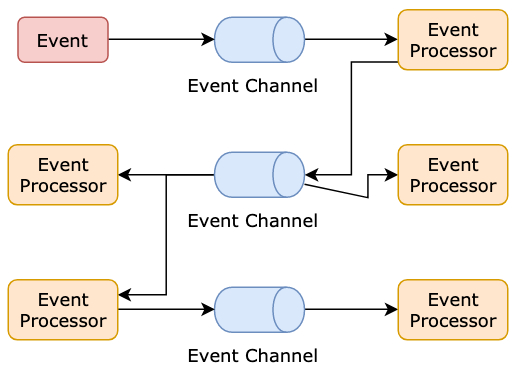

2. Event-Driven Architecture

Any change in state triggers an event in the system. Communication between system components is accomplished through events.

A simplified version consists of a mediator, an event queue, and channels, as the diagram at the end of this section illustrates.

Examples:

- Qt: signals and slots

- Payment infrastructure: As bank gateways often have high latency, asynchronous techniques are used in banking architecture.
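
The mediator, event queue, and channels described above can be sketched in a few lines of Python. This is an illustrative, single-process toy rather than a production message broker. The `Mediator` class, the channel names, and the `demo` function are all assumptions made for the example.

```python
from collections import defaultdict, deque


class Mediator:
    """Routes events from a FIFO queue to the handlers on each channel."""

    def __init__(self):
        self._queue = deque()                # the event queue
        self._channels = defaultdict(list)   # channel name -> handlers

    def subscribe(self, channel, handler):
        self._channels[channel].append(handler)

    def publish(self, channel, payload):
        # Publishing only enqueues; the publisher never calls a handler directly.
        self._queue.append((channel, payload))

    def run(self):
        """Drain the queue, including events published by handlers."""
        processed = 0
        while self._queue:
            channel, payload = self._queue.popleft()
            for handler in self._channels[channel]:
                handler(payload)
            processed += 1
        return processed


def demo():
    log = []
    mediator = Mediator()

    def on_requested(event):
        log.append(("requested", event["id"]))
        # A state change in one component triggers a follow-up event.
        mediator.publish("payment.settled", {"id": event["id"]})

    def on_settled(event):
        log.append(("settled", event["id"]))

    mediator.subscribe("payment.requested", on_requested)
    mediator.subscribe("payment.settled", on_settled)
    mediator.publish("payment.requested", {"id": 1})
    mediator.run()
    return log
```

The two handlers never reference each other. They are coupled only through channel names, which is what lets components be added or replaced independently.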

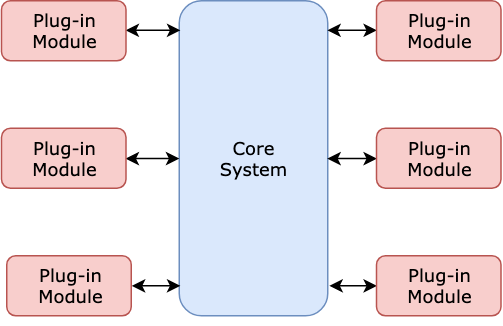

3. Microkernel Architecture (aka Plug-in Architecture)

The functionality of the software is distributed between a core and multiple plugins. The core contains only the most basic functionalities. Each plugin operates independently and implements shared interfaces to achieve different goals.

Examples:

- Visual Studio Code and Eclipse

- MINIX operating system
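
As a rough sketch of the core-plus-plugins split, the Python below defines one shared interface and a core that does nothing except register and dispatch. The names `Plugin`, `Core`, `UppercasePlugin`, and `WordCountPlugin` are hypothetical. Real systems such as the ones listed above have far richer extension APIs.

```python
from abc import ABC, abstractmethod


class Plugin(ABC):
    """The shared interface that every plugin implements."""

    name: str

    @abstractmethod
    def run(self, text: str) -> str:
        ...


class Core:
    """Minimal core: it only registers plugins and dispatches to them."""

    def __init__(self):
        self._plugins = {}

    def register(self, plugin: Plugin) -> None:
        if plugin.name in self._plugins:
            raise ValueError(f"plugin {plugin.name!r} is already registered")
        self._plugins[plugin.name] = plugin

    def available(self):
        return sorted(self._plugins)

    def execute(self, name: str, text: str) -> str:
        return self._plugins[name].run(text)


class UppercasePlugin(Plugin):
    name = "upper"

    def run(self, text: str) -> str:
        return text.upper()


class WordCountPlugin(Plugin):
    name = "wc"

    def run(self, text: str) -> str:
        return str(len(text.split()))
```

Adding a feature means writing a new `Plugin` subclass and registering it. The core itself does not change.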

4. Microservices Architecture

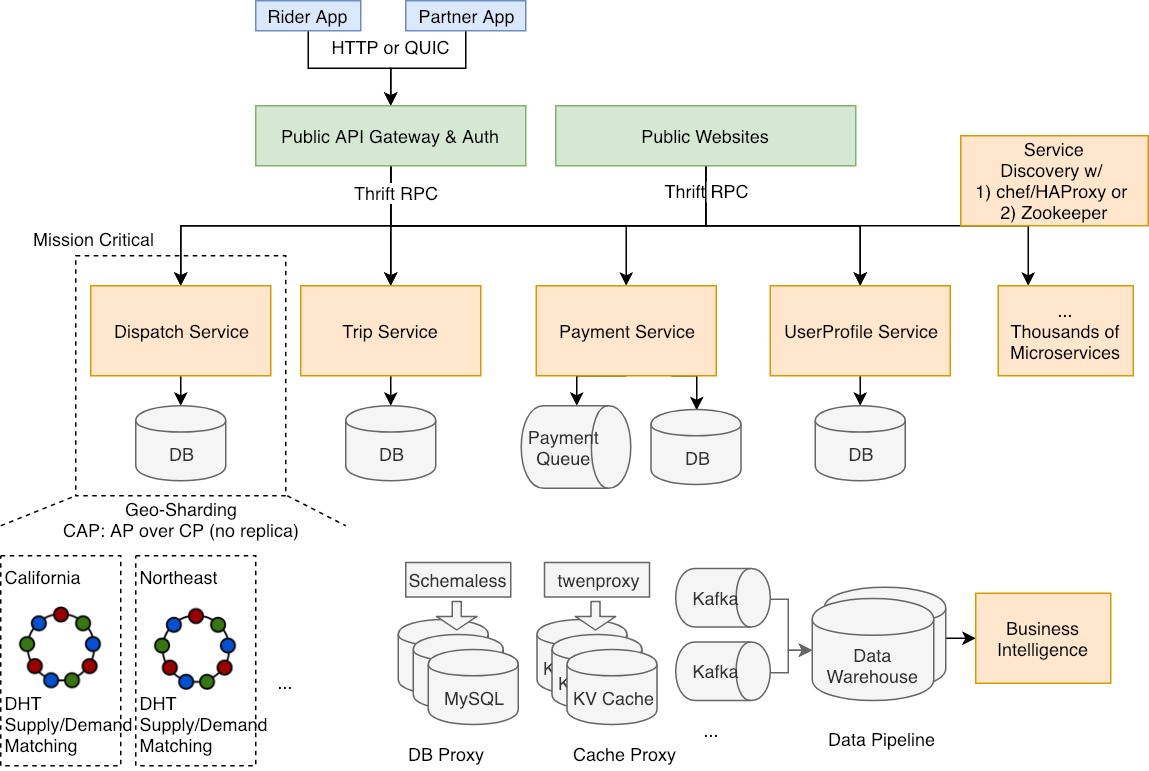

Large systems are decomposed into numerous microservices, each a separately deployable unit that communicates via RPCs.

Examples:

- Uber: See designing Uber

- Smartly
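
The following sketch shows two tiny services talking over RPC, using only Python's standard-library `xmlrpc` modules. It is a toy under stated assumptions: both services run as threads in one process on localhost, whereas real microservices would be deployed separately. The service names and the fare formula are invented for illustration and do not reflect any company's actual system.

```python
import threading
from xmlrpc.client import ServerProxy
from xmlrpc.server import SimpleXMLRPCServer


def start_service(functions):
    """Serve the given functions over XML-RPC on a free localhost port."""
    server = SimpleXMLRPCServer(("127.0.0.1", 0), logRequests=False)
    for function in functions:
        server.register_function(function)
    threading.Thread(target=server.serve_forever, daemon=True).start()
    host, port = server.server_address
    return server, f"http://{host}:{port}"


def quote_fare(distance_km):
    """Hypothetical pricing service: base fare plus a per-kilometre rate."""
    return round(2.0 + 1.5 * distance_km, 2)


def make_trip_service(pricing_url):
    """Hypothetical trip service; it knows pricing only by its URL."""

    def request_trip(rider, distance_km):
        fare = ServerProxy(pricing_url).quote_fare(distance_km)  # the RPC call
        return {"rider": rider, "fare": fare}

    return request_trip


def demo():
    pricing_server, pricing_url = start_service([quote_fare])
    trip_server, trip_url = start_service([make_trip_service(pricing_url)])
    try:
        return ServerProxy(trip_url).request_trip("ana", 4)
    finally:
        for server in (trip_server, pricing_server):
            server.shutdown()
            server.server_close()
```

The trip service depends only on the pricing service's address and its `quote_fare` contract, so either side could be redeployed or rewritten without touching the other.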

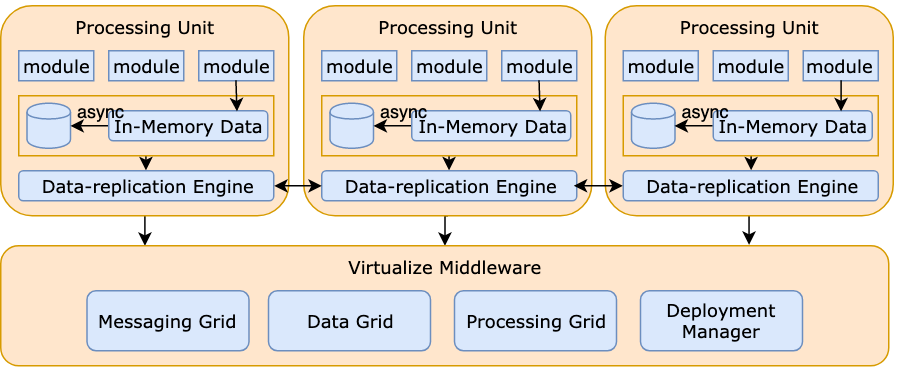

5. Space-Based Architecture

The name "Space-Based Architecture" comes from "tuple space," a form of distributed shared memory. Space-based architecture removes the central database and synchronized database access from the request path, thus avoiding the database bottleneck. All processing units share copies of application data in memory. These processing units can be flexibly started and stopped.

Example: See Wikipedia

- Primarily adopted by Java-based architectures: for example, JavaSpaces.
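
To give a feel for the tuple-space idea, here is a minimal single-process sketch in Python. It is not the JavaSpaces API: the `TupleSpace` class, its `write`/`read`/`take` methods, and the use of `None` as a wildcard are assumptions made for this example. A real space-based system replicates the space across machines.

```python
import threading


class TupleSpace:
    """A shared in-memory space that processing units coordinate through."""

    def __init__(self):
        self._tuples = []
        self._lock = threading.Lock()

    def write(self, item):
        with self._lock:
            self._tuples.append(tuple(item))

    def _find(self, pattern):
        # None in the pattern matches any value at that position.
        for index, item in enumerate(self._tuples):
            if len(item) == len(pattern) and all(
                p is None or p == v for p, v in zip(pattern, item)
            ):
                return index
        return None

    def read(self, pattern):
        """Return a matching tuple without removing it, or None."""
        with self._lock:
            index = self._find(pattern)
            return None if index is None else self._tuples[index]

    def take(self, pattern):
        """Atomically remove and return a matching tuple, or None."""
        with self._lock:
            index = self._find(pattern)
            return None if index is None else self._tuples.pop(index)


def processing_unit(space):
    """Take tasks from the space until none remain, writing results back."""
    while True:
        task = space.take(("task", None))
        if task is None:
            return
        _, n = task
        space.write(("result", n, n * n))


def demo(unit_count=3):
    space = TupleSpace()
    for n in range(5):
        space.write(("task", n))
    units = [
        threading.Thread(target=processing_unit, args=(space,))
        for _ in range(unit_count)
    ]
    for unit in units:
        unit.start()
    for unit in units:
        unit.join()
    results = []
    while (item := space.take(("result", None, None))) is not None:
        results.append(item)
    return sorted(results)
```

Since units coordinate only through the space, changing `unit_count` changes how the work is divided but not the results, which mirrors the claim that processing units can be started and stopped flexibly.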